In a normal distribution, data is symmetrically distributed with no skew. Most values cluster around a central region, and values become less frequent the farther they lie from the center.

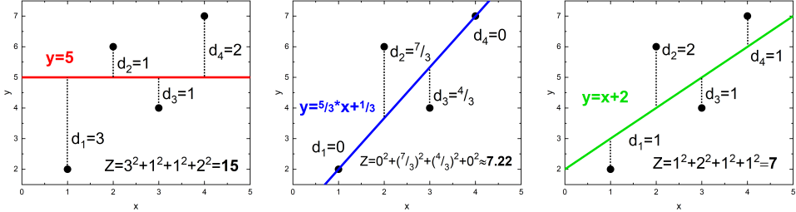

Standard deviation is a useful measure of spread for normal distributions.

In regression, the mean of the squared distances from each point to the fitted regression model can be calculated and reported as the mean squared error (MSE). The squaring is critical: it keeps positive and negative errors from canceling each other out. A lower MSE means the model is more accurate, that is, closer to the actual data. One example of a linear regression using this measure is the least squares method, which evaluates how well a linear regression model fits a bivariate dataset, although its limitation is related to the known distribution of the data.

The term mean squared error is sometimes also used to refer to the unbiased estimate of error variance: the residual sum of squares divided by the number of degrees of freedom. This definition for a known, computed quantity differs from the computed MSE of a predictor in that a different denominator is used.
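As a minimal sketch of the difference in denominators, the following fits a straight line by ordinary least squares to a small bivariate dataset, then computes both the MSE of the predictor (residual sum of squares divided by n) and the unbiased estimate of error variance (residual sum of squares divided by n - 2, assuming two fitted coefficients). The data values and the function names `fit_line` and `residual_sum_of_squares` are made up for illustration and do not come from the text.

```python
from statistics import fmean


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    x_bar, y_bar = fmean(xs), fmean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def residual_sum_of_squares(xs, ys, intercept, slope):
    """Sum of squared vertical distances from each point to the line."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


# Hypothetical bivariate data, chosen only for illustration.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 4.0, 5.0, 4.0, 5.0]

intercept, slope = fit_line(xs, ys)
rss = residual_sum_of_squares(xs, ys, intercept, slope)
n = len(xs)

mse_predictor = rss / n          # denominator: number of observations
error_variance = rss / (n - 2)   # denominator: degrees of freedom (n minus 2 coefficients)

print(f"slope={slope:.3f}, intercept={intercept:.3f}")
print(f"MSE of predictor={mse_predictor:.3f}, error variance estimate={error_variance:.3f}")
```

The two quantities share the same numerator, so the degrees-of-freedom version is always the larger of the two, and the gap shrinks as n grows.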

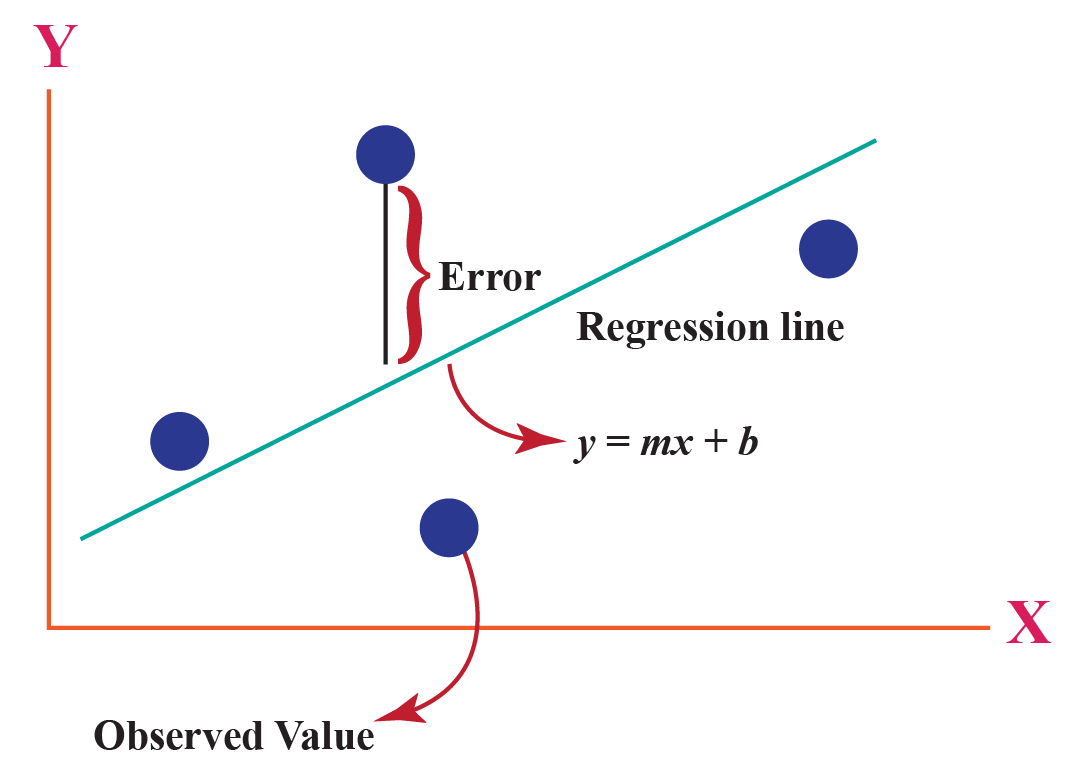

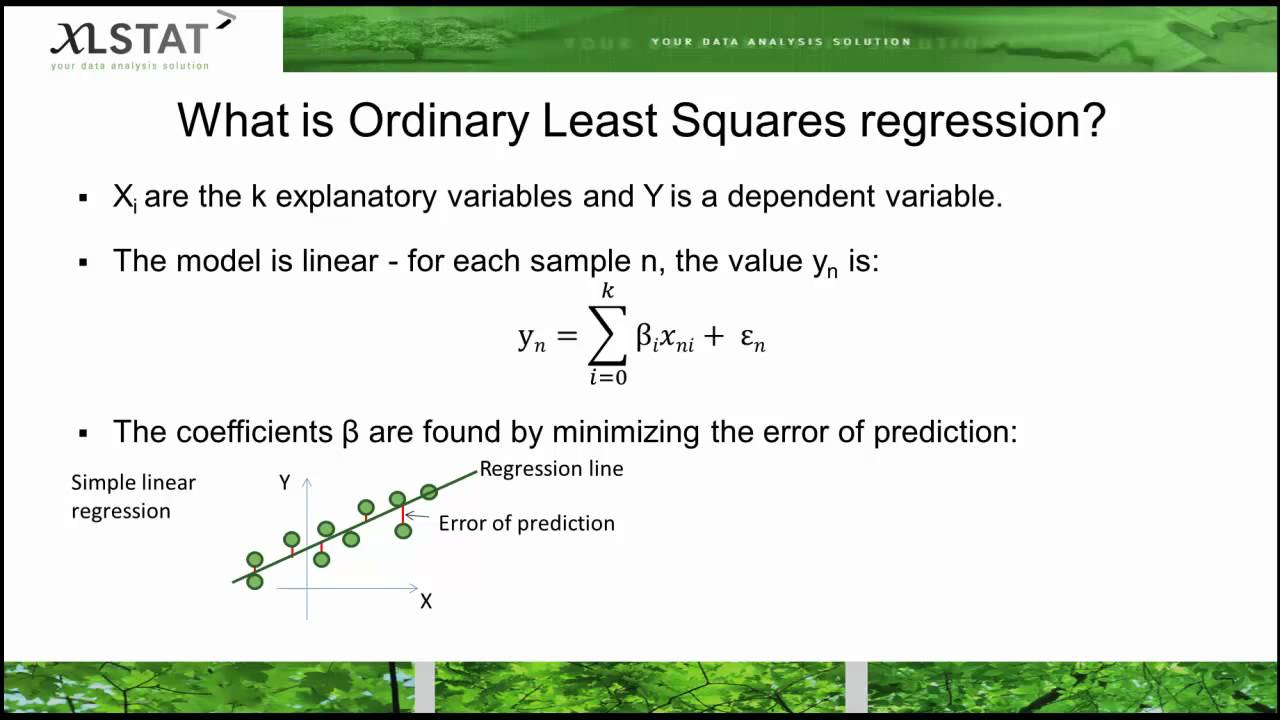

In regression analysis, plotting is a more natural way to view the overall trend of the whole data.

The MSE is a measure of the quality of an estimator. As it is derived from the square of Euclidean distance, it is never negative, and it decreases toward zero as the error approaches zero. The MSE is the second moment (about the origin) of the error, and thus incorporates both the variance of the estimator (how widely spread the estimates are from one data sample to another) and its bias (how far off the average estimated value is from the true value). For an unbiased estimator, the MSE is the variance of the estimator. Like the variance, MSE has the same units of measurement as the square of the quantity being estimated. In an analogy to standard deviation, taking the square root of MSE yields the root-mean-square error or root-mean-square deviation (RMSE or RMSD), which has the same units as the quantity being estimated; for an unbiased estimator, the RMSE is the square root of the variance, known as the standard error.

The definition of an MSE differs according to whether one is describing a predictor or an estimator. The MSE either assesses the quality of a predictor (i.e., a function mapping arbitrary inputs to a sample of values of some random variable), or of an estimator (i.e., a mathematical function mapping a sample of data to an estimate of a parameter of the population from which the data is sampled). For a vector of n predictions, the MSE of the predictor is the average of the squared differences between the observed and the predicted values. In the context of prediction, understanding the prediction interval can also be useful, as it provides a range within which a future observation will fall with a certain probability.
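The relationship between MSE, variance, and bias can be checked numerically. The sketch below treats a small, hypothetical list of estimates as the complete set of outcomes of an estimator (so the population variance is used) and confirms that the MSE equals the variance plus the squared bias. The numbers and the function name `mse_decomposition` are assumptions made for illustration.

```python
from math import sqrt
from statistics import fmean, pvariance


def mse_decomposition(estimates, true_value):
    """Return (mse, variance, bias) for a finite set of estimates.

    The estimates are treated as the full distribution of the estimator,
    so the population variance (divide by n) is the matching variance.
    """
    mse = fmean([(e - true_value) ** 2 for e in estimates])
    variance = pvariance(estimates)
    bias = fmean(estimates) - true_value
    return mse, variance, bias


# Hypothetical estimates of a quantity whose true value is 10.0.
estimates = [9.0, 10.0, 11.0, 12.0]
true_value = 10.0

mse, variance, bias = mse_decomposition(estimates, true_value)
rmse = sqrt(mse)  # same units as the quantity being estimated

print(f"MSE={mse}, variance={variance}, bias={bias}, RMSE={rmse:.4f}")
print("MSE == variance + bias^2:", abs(mse - (variance + bias ** 2)) < 1e-12)
```

If the bias were zero, the MSE would reduce to the variance alone, and the RMSE to its square root.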

In statistics, the mean squared error (MSE) or mean squared deviation (MSD) of an estimator (of a procedure for estimating an unobserved quantity) measures the average of the squares of the errors, that is, the average squared difference between the estimated values and the actual value. MSE is a risk function, corresponding to the expected value of the squared error loss. The fact that MSE is almost always strictly positive (and not zero) is because of randomness or because the estimator does not account for information that could produce a more accurate estimate. In machine learning, specifically empirical risk minimization, MSE may refer to the empirical risk (the average loss on an observed data set), as an estimate of the true MSE (the true risk: the average loss on the actual population distribution). MSE is not to be confused with mean squared displacement.
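Written in symbols, these definitions can be sketched as follows. The notation is an assumption introduced here for illustration: $\hat{\theta}$ is an estimator of an unobserved quantity $\theta$, $f$ is a predictor, and $(x_i, y_i)$ for $i = 1, \dots, n$ are the observed data points.

$$
\operatorname{MSE}(\hat{\theta}) = \mathbb{E}\left[(\hat{\theta} - \theta)^2\right],
\qquad
\widehat{R}_n(f) = \frac{1}{n}\sum_{i=1}^{n}\bigl(y_i - f(x_i)\bigr)^2
$$

The first expression is the risk under squared error loss. The second is the empirical risk, the average squared loss on the observed data set, which serves as an estimate of the true risk $\mathbb{E}\left[(Y - f(X))^2\right]$ over the population distribution.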
